Statistical thinking in business is a discipline built on asking better questions before acting on what the data appears to show.

According to Qlik’s global research on data literacy, 62% of executives still rely on experience and advice over data when making critical decisions. That is not a technology problem. It is a thinking problem.

Statistical thinking in business is the discipline that closes that gap. It is not about hiring data scientists or buying analytics software. It is a way of asking questions before you act on what the data appears to show.

This episode of the Predictable B2B Success podcast features Dr. Michael Orkin, a statistician and UC Berkeley doctorate who has spent decades applying probability theory to real-world decisions. He makes a case that should worry most B2B leaders: being data-exposed is not the same as being data-driven. And the gap between the two is where most growth strategies quietly break down.

You can also explore how this connects to data-driven decision-making systems for B2B companies to gain a broader view of how analytical discipline translates into pipeline results.

In this post, you will learn:

– What statistical thinking in business actually means and how it differs from data analytics

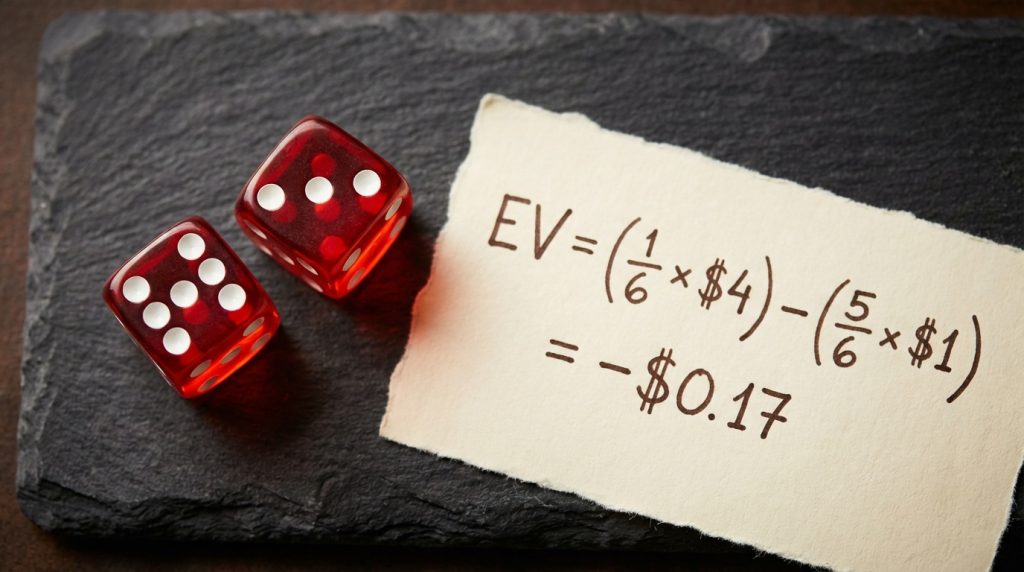

– The expected value concept that separates disciplined bets from lucky ones

– Why positive expected value is not enough, and what the Kelly criterion says to do instead

– The correlation mistake that destroys marketing attribution for most B2B companies

– A practical six-step testing framework you can apply to campaigns, hiring, and product decisions

Key takeaways

- Statistical thinking is a mindset, not a toolset. It asks whether the data is a real signal before you act.

- Every major business decision has an expected value. Most leaders never calculate it.

- Positive EV is not enough. The Kelly criterion shows that over-betting a winning strategy still destroys companies.

- Correlation is not causation. Confounding variables are present in most B2B attribution data sets.

- A single win cannot tell you whether you have skill or luck. Statistical significance is the standard.

- As Dr. Orkin puts it: “You can’t just have positive EV; you have to have good money management. And the other thing is making decisions based on data that are observational as if they were cause and effect.”

About Dr. Michael Orkin

Dr. Michael Orkin is a statistician, data scientist, and professor at California State University, East Bay. He earned his doctorate at UC Berkeley and has spent decades at the intersection of probability theory, applied statistics, and real-world decision-making.

He consults with game developers on whether their products qualify as games of skill rather than chance under regulatory standards, work that requires the same rigorous mathematical thinking he teaches. He is the author of The Story of Chance: Beyond the Margin of Error, available on Amazon. His work cuts through the statistical misconceptions that cost businesses, public health departments, and individual investors dearly.

What is statistical thinking in business?

Statistical thinking in business is a philosophy of learning and decision-making built on three core principles, as defined by the American Society for Quality:

- All work occurs in a system of interconnected processes.

- Variation exists in all processes.

- Understanding and reducing that variation is the key to better outcomes.

In practice, it means treating every business process as something that produces variable outputs, and asking whether the variation you observe is signal or noise before acting on it.

This is distinct from intuition-based management, where a leader sees a strong month and doubles their sales headcount, or sees a dip and cuts their marketing budget. Both reactions assume the variation is meaningful. Statistical thinking asks first: is it?

McKinsey’s 2014 customer analytics research found that intensive users of customer analytics are 23 times more likely to outperform competitors on new customer acquisition and 19 times more likely to achieve above-average profitability. That gap does not come from dashboards. It comes from the questions leaders ask before those dashboards can be useful.

After interviewing more than 500 B2B tech CEOs on the Predictable B2B Success podcast, I see a consistent pattern. Leaders who grow predictably do not just have data. They have a discipline around what the data can and cannot tell them. The ones who plateau often have just as much data, and far too much confidence in what it means.

Why is statistical thinking important in business?

Statistical thinking is important in business because it prevents leaders from acting on patterns that do not exist, correlations that are not causes, and single results that are noise rather than signal.

Without it, companies build strategies on lucky quarters, attribute revenue to the wrong campaigns, and over-invest in channels before the evidence supports the bet. With it, they can distinguish repeatable edges from variance, avoid confounding variables, and scale spending in proportion to the strength of their evidence.

As Dr. Ron Snee, co-author of Statistical Thinking: Improving Business Performance, has noted, most managers encounter statistics as formulas. Statistical thinking reframes it as “thought processes”: a way of structuring every decision before the data is even collected.

What are the benefits of statistical thinking in business?

Businesses that apply statistical thinking consistently gain five specific advantages:

- Better bet sizing. They invest in proportion to the strength of evidence, not the size of ambition, preventing over-commitment to unvalidated strategies.

- More reliable attribution. They isolate the variables that actually caused the result, rather than attributing it to the last campaign or the most visible activity.

- Faster course correction. They establish baselines before testing, so they can recognise genuine signals quickly rather than chasing every fluctuation.

- Lower risk of catastrophic failure. Understanding both expected value and money management prevents the over-betting that destroys even well-directed companies.

- Compounding credibility. Decisions based on statistical significance rather than single outcomes build a track record of repeatable wins rather than a string of unexplained variance.

Examples of statistical thinking in business

Statistical thinking is easiest to see in contrast. Here are four concrete examples drawn from this episode:

Expected value in business bets. Before hiring a new sales director, a leader applying statistical thinking asks: given what we know about our ICP (ideal customer profile) and deal cycle, what is the realistic long-run average ROI of this hire? Not “do we need one?” but “does this bet have positive expected value?”

Money management under uncertainty. Sam Bankman-Fried understood expected value better than almost anyone in crypto. He went broke because he staked too much capital on individual bets. Statistical thinking would have required him to size positions proportionally rather than maximally.

Correlation versus causation. A researcher found a strong correlation between soft drink sales and polio cases. The data was real. The conclusion was wrong. The hidden driver was weather, which moved both at the same time. A statistical thinker asks about confounding variables before acting.

Separating skill from luck. Your company closes three big deals in a quarter. Statistical thinking asks: is our win rate consistently better than what chance would produce with similar inputs? One good quarter is not enough to answer that question.

Statistical thinking vs data analytics: why the distinction matters

Statistical thinking and data analytics are not interchangeable. Statistical thinking is the mindset that frames the question. Data analytics is the toolset that processes the answer.

You can run sophisticated analytics and still draw systematically wrong conclusions if the question is poorly framed. The tools are only as useful as the thinking behind them.

| | Statistical Thinking | Data Analytics |
|---|---|---|
| What it is | A decision-making mindset | A set of tools and techniques |
| Primary question | “Are we asking the right question?” | “What does the data show?” |
| When you apply it | Before analysis begins | After the question is framed |
| Risk if missing | Wrong conclusions from correct data | No analysis at all |
| Examples | Identifying confounding variables, setting test criteria, distinguishing signal from noise | Regression, cohort analysis, attribution modelling, funnel tracking |

Dr. Ron Snee, co-author of Statistical Thinking: Improving Business Performance, put this plainly: statistical thinking is about “thought processes,” not formulas. You can have a full BI stack, a data engineering team, and weekly reporting cadences, and still be thinking about your business in ways that produce systematically wrong conclusions.

The distinction is clearest in two places.

Marketing attribution. A company runs a LinkedIn campaign and sees a revenue spike the following month. Data analytics shows the timing. Statistical thinking asks whether the spike was caused by the campaign, or whether it merely coincided with a seasonal buying cycle, a competitor exiting the market, or a press mention.

Performance reviews. A sales rep closes three big deals in a quarter. Data analytics shows the outcome. Statistical thinking asks whether the rep’s process is genuinely better than their peers, or whether those three accounts were inbound, easier to close, and would have converted regardless of who handled them.

The tools cannot answer those questions on their own. The thinking has to come first.

In my experience working with seed-to-Series C B2B founders on their content and executive positioning, this distinction comes up repeatedly. A CEO sees their LinkedIn posts drove a spike in inbound leads and concludes LinkedIn is the channel to go all-in on. Often, the spike was due to a single post that hit a nerve, a seasonal buying surge, or a product launch coinciding with the content schedule. Without a controlled test case, they cannot tell the difference.

Variation is normal: the Deming insight most CEOs skip

Every business process produces variable outputs. The question is never “why did results change?” It is: “is this change outside the normal range?”

W. Edwards Deming, the statistician who transformed Japanese manufacturing after World War II, built his entire system around one insight: variation is not a crisis. It is a property of every process that has ever existed.

Deming’s framework distinguishes between two types of variation.

Common cause variation is the routine fluctuation built into your system. If your monthly revenue varies by 8% even in stable conditions, that 8% is common cause variation. It does not mean something is wrong. It means your process has a natural spread.

Special cause variation is a genuine signal. It is variation that falls outside the normal range and indicates that something specific has changed, for better or worse.

The management mistake Deming spent his career fighting is tampering: treating common-cause variation as if it were special-cause variation, and intervening when no intervention is warranted. A CEO who cuts a marketing channel after one bad month, then restores it after a good month, is tampering. Each intervention adds noise to a system that was functioning within its normal range.

A pattern I see repeatedly with funded B2B founders: a content channel has a weak month, the team pivots to a different channel, and three months later, they are back to the first. No test was run. No baseline was established. The system was tampered with twice, and the pivot itself is now the confounding variable in any future measurement.

The practical implication for B2B leaders: before you react to any data point, establish the baseline. What does normal variation look like in this metric? Only when a result falls outside that range is it worth treating as a signal and responding to.
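One way to make "establish the baseline" concrete is a simple range check. The sketch below is a minimal illustration, not Deming's own procedure: it assumes the normal range can be approximated as the historical mean plus or minus three sample standard deviations, and the revenue figures and function names are hypothetical.

```python
from statistics import mean, stdev

def baseline_range(history, k=3.0):
    """Return (low, high): historical mean plus/minus k sample standard deviations.

    Assumption: a k-sigma band is a reasonable stand-in for "normal variation".
    """
    centre = mean(history)
    spread = stdev(history)
    return centre - k * spread, centre + k * spread

def is_signal(value, history, k=3.0):
    """True when a new observation falls outside the baseline range."""
    low, high = baseline_range(history, k)
    return value < low or value > high

# Hypothetical monthly revenue (in thousands) from a stable period.
monthly_revenue = [100, 104, 97, 108, 95, 102, 99, 106, 101, 98]

low, high = baseline_range(monthly_revenue)
print(f"normal range: {low:.1f} to {high:.1f}")
print(is_signal(105, monthly_revenue))  # False: inside the normal range
print(is_signal(130, monthly_revenue))  # True: outside the normal range
```

With only ten months of history the limits are rough. The point is the habit of computing them before reacting, not the exact multiplier.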

Deming operationalised this through the PDSA cycle: Plan (form a hypothesis), Do (run a small test), Study (analyse the results against your baseline), Act (scale what works, discard what does not). This is the ancestor of every A/B testing framework in modern B2B marketing, and most teams skip the Study step, the one where you ask whether the result is actually outside normal variation.

The expected value trap that takes down smart companies

Expected value is the long-run average outcome of a decision. Most business bets have an implied EV that leaders never calculate, so they end up celebrating outcomes from bets whose EV was never positive to begin with.

EV is the average outcome you should expect if you made a particular decision over and over again across many repetitions.

Dr. Orkin uses the casino dice game of craps as his starting example. Rolling a seven has a 1-in-6 probability. If the casino pays 4-to-1 on that bet, your expected value per dollar staked is negative: about minus 17 cents. The outcome on any single roll might be positive. But the EV is negative, and the law of large numbers guarantees it will catch up with you.
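The arithmetic behind that figure can be checked directly. The sketch below assumes the bet described above: a 1-in-6 chance of winning, a 4-to-1 payout, and a loss of the one-dollar stake otherwise. The function names are illustrative, and the simulation is only there to show the long-run average drifting toward the exact value.

```python
import random
from fractions import Fraction

def expected_value(p_win, payout_odds, stake=1):
    """EV of a bet that pays payout_odds per unit staked on a win and loses the stake otherwise."""
    return p_win * payout_odds * stake - (1 - p_win) * stake

def simulate_average(n_bets, payout_odds=4, seed=0):
    """Average result per one-dollar bet over n_bets simulated rolls of two dice."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n_bets):
        roll = rng.randint(1, 6) + rng.randint(1, 6)
        total += payout_odds if roll == 7 else -1
    return total / n_bets

exact = expected_value(Fraction(1, 6), 4)
print(exact, float(exact))        # -1/6, roughly -0.167 per dollar staked
print(simulate_average(200_000))  # close to the exact value over many rolls
```

Any single roll can still win four dollars. Only the average over many rolls converges on the negative figure.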

Every major B2B decision is a bet with an expected value attached to it, whether you have calculated it or not. Hiring a head of sales. Launching into a new vertical. Doubling the paid acquisition budget. Each bet has a long-run average embedded in it.

The trap: companies celebrate the outcome of a single bet without establishing whether the underlying EV was ever positive. They land a deal they should not have closed, attribute it to their sales motion, and build a playbook from it. That playbook then fails at scale, because it was built on a lucky outlier, not a repeatable edge.

Across interviews with more than 500 B2B tech CEOs, the most common version of this trap looks the same. A company closes a marquee account in year two and concludes the enterprise motion is working. They hire two enterprise AEs, then discover six months later that the original account came from a personal referral. It was never a product of any repeatable sales process.

A 2025 SoftServe global survey found that 58% of leaders say key business decisions at their organisations are based on inaccurate or inconsistent data most of the time, if not always. Analytics tools do not fix that on their own. The precondition for them to work is asking whether your method has genuinely positive EV before you bet the business on it.

Why positive EV is not enough: the money management blind spot

Even a genuinely positive-EV strategy destroys a company if too much capital is staked before the evidence base is wide enough. This is the mistake that ends businesses led by analytically sophisticated people.

Dr. Orkin’s case study is Sam Bankman-Fried, the crypto founder who understood expected value better than most people on the planet, and still went to prison. SBF had genuine positive EV bets. He understood probability. He understood payoff odds. What he failed at was money management.

Dr. Orkin is direct on why this is the hidden failure mode: “It’s not enough to have positive EV. You also have to manage your money properly. If you look at every business that goes under, you’ll probably find that along the way.”

If you repeatedly bet your entire bankroll on a single outcome, and each time that outcome has even a 1% chance of going against you, you will eventually go broke. The mathematics are unambiguous. The more you stake relative to your total capital on any single bet, even a positive-EV bet, the faster ruin approaches.
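A short calculation shows why. The sketch below reads the claim as a sequence of independent all-in bets, each with a 1% chance of total loss. That reading is an assumption, and the function name is illustrative.

```python
def survival_probability(p_loss, n_bets):
    """Chance of never losing across n_bets independent all-in bets."""
    return (1 - p_loss) ** n_bets

for n in (10, 100, 500):
    print(n, round(survival_probability(0.01, n), 3))
# 10 bets: 0.904, 100 bets: 0.366, 500 bets: 0.007
```

A 99% chance of winning each bet feels safe, yet after 100 all-in bets the odds of still being in business are worse than one in two.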

John Kelly, a mathematician at Bell Labs in the 1950s, developed a formula to solve this problem. The Kelly criterion determines the optimal fraction of your capital to stake on a bet, given your edge and the payout odds. The intuition is simple: the more you have, the more you bet; the less you have, the less you bet. It is structurally impossible to go broke on a winning strategy if you follow it correctly.
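For a simple win-or-lose bet, the Kelly fraction is commonly written as f* = p - (1 - p) / b, where p is the probability of winning and b is the net payout per unit staked. The sketch below applies that textbook form. The episode does not walk through the formula, so the example numbers and the function name are assumptions for illustration.

```python
def kelly_fraction(p_win, net_odds):
    """Kelly fraction f* = p - (1 - p) / b for a bet paying net_odds-to-1.

    Returns 0.0 when the edge is negative: the formula then says not to bet.
    """
    f = p_win - (1 - p_win) / net_odds
    return max(f, 0.0)

# Hypothetical even-money bet with a 60% chance of winning: stake about 20% of capital.
print(round(kelly_fraction(0.60, 1), 3))   # 0.2

# The 4-to-1 bet on a seven from the craps example has negative EV: stake nothing.
print(kelly_fraction(1 / 6, 4))            # 0.0
```

In business settings the edge is an estimate rather than a known probability, which is one reason to stake less than the formula suggests until the evidence firms up.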

Ed Thorp wrote Beat the Dealer in 1962 and proved that blackjack could be beaten with card counting. He later applied the Kelly criterion to financial markets with documented results.

The B2B translation is not abstract. Many Series A companies that run out of runway before reaching product-market fit had a plausibly positive-EV product hypothesis and poor money management. They bet too much, too fast, on validation that had not arrived yet.

When I work with funded B2B founders on content strategy, this tension appears in a different but recognisable form. They want to commit their entire content budget to LinkedIn before they have evidence it converts for their specific ICP. The instinct to commit fully is understandable. The timing is premature. Start with a test case. Measure against the baseline. Then scale the bet in proportion to the strength of the signal.

Correlation is not causation, and the cost of confusing them is real

Correlation means two variables move together. Causation means one causes the other. Confusing them is the single most common statistical mistake in B2B strategy.

Statistical thinking in business requires mastering this distinction. Building a strategy on correlation without establishing causation leads to expensive, sometimes catastrophic, decisions.

Dr. Orkin gives a striking historical example. In the 1950s, a researcher discovered a strong positive correlation between soft drink sales and polio cases. The data was accurate. The conclusion that soft drinks caused polio was completely wrong.

The actual driver was a third variable: weather. As temperatures rose, more people bought soft drinks, and the polio virus became more active at the same time. Neither caused the other. Both were effects of the same underlying variable. In statistics, this is called a confounding variable, and it is present in far more B2B data sets than most leaders realise.
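A small simulation makes the mechanism visible. The sketch below uses entirely synthetic numbers, not the historical data: temperature drives both series, neither series affects the other, and the correlation between them largely disappears once the linear effect of temperature is removed. All coefficients and names are assumptions chosen for illustration.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def residuals(ys, xs):
    """What is left of ys after removing a least-squares straight-line fit on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]

rng = random.Random(42)
temperature = [rng.uniform(5, 35) for _ in range(500)]
# Both series depend on temperature plus independent noise; neither depends on the other.
soft_drink_sales = [10 * t + rng.gauss(0, 40) for t in temperature]
polio_cases = [2 * t + rng.gauss(0, 10) for t in temperature]

raw = pearson(soft_drink_sales, polio_cases)
adjusted = pearson(residuals(soft_drink_sales, temperature),
                   residuals(polio_cases, temperature))
print(f"raw correlation: {raw:.2f}")
print(f"after controlling for temperature: {adjusted:.2f}")
```

In real attribution data the confounder is rarely this obvious or this easy to measure, which is why a controlled test is usually the more reliable route.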

As Dr. Orkin puts it: “When you have correlation, it could be real, but it doesn’t mean causation and there could be variables in the background. So you have to do more work.”

A direct business parallel: a company runs an email nurture sequence and sees 14% more deals close the following month. They conclude that the sequence drove the result. But in that same month a competitor announced layoffs, two industry events generated inbound demand, and a pricing change made their offer more competitive. Without a control group, no one can isolate the effect of the sequence.

A second example from Dr. Orkin: there is a strong observed correlation between alcohol use and lung cancer. The real cause is smoking. Smokers are disproportionately represented in the alcohol-consuming group. The alcohol is not causing the cancer; it is correlated with a behaviour that is.

For B2B revenue teams, this matters most in attribution. The Qlik finding cited earlier, that 62% of executives rely on experience over data for critical decisions, may be partly explained by this: many have been burned by correlations that looked like causes but failed to hold when tested.

The fix is not more data. It is better question design. Before acting on any observed correlation, ask: What else changed at the same time? What third variable could explain both movements? Can we run a test where we hold everything constant except the variable we are measuring?

The work I do, ghostwriting educational email courses for B2B tech founders, is built on exactly this discipline. The question is never “what content did we publish before the leads came in?” The question is, “What was the controlled test that isolated this content as the actual driver?”

How do you separate skill from luck after a major win?

You cannot separate skill from luck from a single outcome. You need enough repeated observations to establish whether your success rate is statistically better than chance.

When I work with founders on content and positioning strategy, the most dangerous moment is after a breakthrough quarter. Leadership concludes the approach is working, scales spend, and discovers six months later that the results do not repeat. The original quarter was one strong outcome, not a statistically significant pattern. They scaled a variance, not a strategy.

Dr. Orkin is unambiguous on the ceiling here: “The best you can do is be significantly better. That’s a statistical term: significantly better than your competitor or than chance. It does not mean 100%. You’re never 100% certain.”

The statistical term is significance. A result is statistically significant when it falls outside the range of variation that chance alone would plausibly produce. Confidence intervals express that range. When a survey reports that 48% of C-suite leaders feel less confident about critical decisions than before, with a margin of error of plus or minus 3 percentage points, the plausible range for the true figure is 45% to 51%. A result anywhere within that range is not meaningfully different from 48%.
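To see where a margin of error of about 3 points comes from, here is a minimal sketch using the standard normal approximation for a proportion at 95% confidence. The sample size of 1,000 is an assumption chosen for illustration, since the survey's actual sample size is not given here.

```python
from math import sqrt

def margin_of_error(p, n, z=1.96):
    """Approximate margin of error for a sample proportion p from n responses.

    z=1.96 corresponds to the conventional 95% confidence level.
    """
    return z * sqrt(p * (1 - p) / n)

def confidence_interval(p, n, z=1.96):
    """Return (low, high) bounds around the sample proportion."""
    m = margin_of_error(p, n, z)
    return p - m, p + m

# Hypothetical: 48% of 1,000 respondents.
moe = margin_of_error(0.48, 1000)
low, high = confidence_interval(0.48, 1000)
print(f"margin of error: {moe:.3f}")       # about 0.031, i.e. roughly 3 points
print(f"interval: {low:.3f} to {high:.3f}")
```

Because the margin shrinks with the square root of the sample size, halving it takes roughly four times as many responses.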

For B2B companies building growth systems, this has a direct implication. The goal of your testing process is not to find proof. It is to achieve statistical significance: to accumulate enough signal that you can act with confidence and manage the risk of being wrong.
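One simple way to ask whether a win rate is better than chance is an exact binomial tail probability: if the true rate were only the baseline, how often would you see at least this many wins? The sketch below assumes a hypothetical 20% baseline close rate and made-up deal counts. The 0.05 threshold mentioned in the comments is a common convention, not a rule from the episode.

```python
from math import comb

def p_value_at_least(wins, trials, baseline_rate):
    """Probability of at least `wins` successes in `trials` if the true rate is baseline_rate."""
    return sum(
        comb(trials, k) * baseline_rate**k * (1 - baseline_rate) ** (trials - k)
        for k in range(wins, trials + 1)
    )

# Hypothetical: 3 closed deals out of 10 against a 20% baseline.
small_sample = p_value_at_least(3, 10, 0.20)
print(round(small_sample, 3))   # 0.322: easily explained by chance

# Same 30% win rate, but sustained over 100 opportunities.
large_sample = p_value_at_least(30, 100, 0.20)
print(large_sample < 0.05)      # True: unlikely to be chance alone
```

The win rate is identical in both cases. Only the number of observations changes, which is why one good quarter cannot settle the question.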

HBR research on company performance found that much of what passes for business strategy advice is built on studying exceptional outcomes without accounting for the likelihood that those outcomes were partly due to chance. Researchers Raynor and Ahmed analysed 25,000 companies to identify what actually separates long-run exceptional performers from those that simply had great luck in a single period.

The companies that build genuinely repeatable growth tend to run their B2B sales strategy as a sequence of small experiments rather than a series of all-in commitments. They test messaging in one segment before scaling. They run a pilot campaign in one market before national activation. They accumulate significance before they scale spend.

A practical statistical thinking framework for B2B leaders

Dr. Orkin summarises the two most expensive statistical mistakes B2B leaders make: “You can’t just have positive EV — you have to have good money management. And the other thing is making decisions based on data that are observational as if they were cause and effect.”

Those two errors account for most of the avoidable failures in funded B2B companies. The framework below addresses both directly.

1. Define success criteria before you run any test

The most common mistake is measuring results after the fact and then deciding what they mean. Set your success criteria in advance: what counts as a meaningful increase, over what period, compared to what baseline?

2. Establish your normal variation range first

Before any campaign, hiring decision, or product change, document what your metrics normally look like. By how much does revenue fluctuate month to month? By how many percentage points does win rate vary? Only when a post-intervention result falls outside that range do you have a genuine signal.

3. Start small and isolate the variable

You do not need a large data set to start generating a signal. A city-level campaign, a segment-specific email test, or a pilot cohort for a new service tier can produce meaningful results if everything else is held constant. The discipline starts with the design; sample size then determines how small an effect you can reliably detect.

4. Watch for confounding variables

Before attributing any result to your intervention, list every other thing that changed in the same period. A competitor exiting. A price change. Seasonality. A PR mention. Any of these can produce the result you are observing without your intervention playing any causal role.

5. Manage capital proportionally to the strength of evidence

This is the Kelly lesson. Even when a channel or strategy shows positive expected value, resist committing the full budget immediately. Scale investment in proportion to the statistical strength of the evidence. The discipline of driving pipeline efficiently depends on this: early budget goes to generating signal, not to scaling bets you cannot yet verify.

6. Build redundancy into your analysis

For high-stakes decisions, Dr. Orkin recommends small teams rather than individual analysts. The landmark Denmark MMR vaccine study, which definitively refuted the false autism correlation with data from roughly 537,000 children, was a multi-disciplinary team effort. One analyst working alone is more likely to miss corrupted data, confounding variables, or errors in study design. Redundancy is not overhead; it is quality control.

How do you develop statistical thinking?

Statistical thinking is developed through deliberate practice, not through learning formulas. The starting point is establishing baselines before acting on any data point.

The first practical habit: before any campaign, hire, or product change, write down what your relevant metric looks like in a normal month. What is the range? What is the average? Any result that falls within that normal range is not a signal; it is noise. Only results that fall outside it warrant a strategic response.

The second habit: list competing explanations before concluding anything. Every time you see a result you want to attribute to your intervention, spend three minutes listing other things that changed in the same period. If you cannot rule out three or four other explanations, you do not yet have a cause.

The third habit: read Dr. Orkin’s book, The Story of Chance: Beyond the Margin of Error. It is written for a general audience and requires no mathematics. It is one of the clearest practitioner-level treatments of how probability thinking applies to real decisions.

None of this requires a statistics degree. It requires a disciplined habit of asking better questions before acting on what the data appears to show.

Companies that apply statistical thinking in business tend to compound their advantages over time. Those that skip it mistake lucky variation for a proven strategy, right up until the variance reverses and the approach is exposed. Whether you build this capability internally or work with a content strategist who applies these same disciplines to your growth communications, the foundation is identical: test small, measure honestly, scale what the data supports.

FAQ: statistical thinking in business

What is statistical thinking in business?

Statistical thinking in business is a philosophy of decision-making based on three principles: all work occurs in a system of interconnected processes; variation exists in all processes; and understanding and reducing that variation are the keys to success. It means treating business outcomes as the product of systems with natural variation, not isolated events to react to, and asking whether observed results are a genuine signal or normal noise before acting.

How is statistical thinking different from data analytics?

Statistical thinking is the mindset that determines which questions to ask before any analysis begins. Data analytics is the toolset: regression, cohort analysis, and attribution modelling are the methods used to process the answer. You can have a sophisticated analytics stack and still draw systematically incorrect conclusions if the underlying questions are poorly framed. Statistical thinking is what prevents that.

What is expected value, and why does it matter for business decisions?

Expected value (EV) is the average outcome of a decision if repeated many times under similar conditions. Every significant business bet, whether a new market entry, a hiring decision, or a pricing change, has an implicit EV. Statistical thinking requires confirming the EV is genuinely positive before committing capital, not just checking whether the most recent outcome was good. A positive result from a negative-EV decision is luck. It will not repeat.

How do you separate skill from luck in business performance?

You need repeated observations across enough repetitions to establish whether your success rate is genuinely better than what chance would produce with similar inputs. A single strong quarter, a big deal, or a viral campaign cannot tell you this. The statistical standard is significance: results that fall outside the range of variation that chance alone would predict. Until you have significance, treat wins as promising signals and avoid aggressive scaling.

What is the Kelly criterion, and how does it apply to B2B growth?

The Kelly criterion is a formula developed by mathematician John Kelly at Bell Labs that calculates the optimal fraction of capital to commit to a bet given your estimated edge and the payoff odds. In B2B terms, it argues for scaling investment in proportion to the strength of your evidence. Even a positive-EV strategy can destroy a company if too much capital is concentrated in it before the evidence base is broad enough. Start small, generate a signal, scale proportionally.

Why is statistical thinking important in business?

Statistical thinking is important in business because it prevents leaders from confusing noise with signal, correlation with causation, and lucky outcomes with repeatable strategy. Without it, companies build entire growth playbooks on single outcomes that were never statistically significant. With it, they establish baselines, test properly, scale what the data actually supports, and avoid the money management failures that end even analytically capable companies.

How do you develop statistical thinking in business?

Develop statistical thinking by building three habits: first, establish your normal variation range for any metric before you test or intervene, so you can recognise a real signal when it appears. Second, list competing explanations for every result before attributing it to your intervention. Third, scale investment in proportion to the statistical strength of your evidence, not in proportion to how much you want a strategy to work. No mathematics required. Only disciplined question-asking.

Related resources

- Data-driven decision-making for B2B companies: How B2B organisations build the systems and mindset to act on data effectively.

- B2B growth marketing: building high-performing tech brands: The frameworks growth marketers use to run disciplined, data-backed experiments.

- How to drive pipeline with less spend using a data-backed strategy: A closer look at attribution, A/B testing, and analytics-driven pipeline generation.

- B2B buyer psychology: the Fortune 100’s $50B strategy: Why data about what buyers say and what they do are often not the same thing.

Related Links

- Get a copy of The Story of Chance: Beyond the Margin of Error, available on Amazon and Barnes and Noble.

- Connect with Dr. Orkin on LinkedIn.

Subscribe to & Review the Predictable B2B Success Podcast

Thanks for tuning into this week’s episode of the Predictable B2B Success podcast! If the information from our interviews has helped your business journey, please visit Apple Podcasts, subscribe to the show, and leave us an honest review.